-

Leverkusen sink Hamburg to keep in touch with top four

Leverkusen sink Hamburg to keep in touch with top four

-

Love match: WTA No. 1 Sabalenka announces engagement

-

Man City falter as Premier League leaders Arsenal go seven points clear

Man City falter as Premier League leaders Arsenal go seven points clear

-

Man City title bid rocked by Forest draw

-

Defending champ Draper ready to ramp up return at Indian Wells

Defending champ Draper ready to ramp up return at Indian Wells

-

Arsenal extend lead in title race after Saka sinks Brighton

-

US, European stocks rise as oil prices steady; Asian indexes tumble

US, European stocks rise as oil prices steady; Asian indexes tumble

-

Trump rates Iran war as '15 out of 10'

-

Nepal votes in key post-uprising polls

Nepal votes in key post-uprising polls

-

US Fed warns 'economic uncertainty' weighing on consumers

-

Florida family sues Google after AI chatbot allegedly coached suicide

Florida family sues Google after AI chatbot allegedly coached suicide

-

Alcaraz unbeaten run under threat from Sinner, Djokovic at Indian Wells

-

UK warship to leave for Cyprus next week: officials

UK warship to leave for Cyprus next week: officials

-

Iran's supreme leader gone, but opposition still at war with itself

-

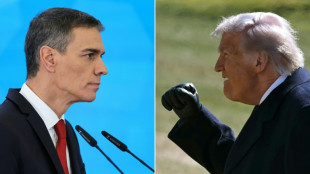

Spain denies US claim of military cooperation on Iran as rift deepens

Spain denies US claim of military cooperation on Iran as rift deepens

-

Mideast war rekindles European fears over soaring gas prices

-

'Miracle to walk' says golfer after lift shaft fall

'Miracle to walk' says golfer after lift shaft fall

-

'Nothing is working': Gulf travel turmoil hits Berlin tourism fair

-

Harvey Weinstein rape retrial to start April 14: publicist

Harvey Weinstein rape retrial to start April 14: publicist

-

No choke but 'walloping', South Africa coach says of T20 flop

-

Bayer gets preliminary approval for weedkiller class settlement

Bayer gets preliminary approval for weedkiller class settlement

-

Russia to free two Hungarian-Ukrainian POWs, Putin says

-

Michelangelo's works hidden in 'secret room', researcher says

Michelangelo's works hidden in 'secret room', researcher says

-

Adidas shares slump on outlook, Mideast war casts shadow

-

'No to the war': Spain digs in as rift with US deepens

'No to the war': Spain digs in as rift with US deepens

-

Ivory Coast cuts cocoa producer price by nearly 60 percent: govt

-

Berlin film festival chief to remain in job after Gaza row

Berlin film festival chief to remain in job after Gaza row

-

Allen's record ton powers New Zealand into T20 World Cup final

-

Stocks firm, oil steadies after sell-off on Middle East turmoil

Stocks firm, oil steadies after sell-off on Middle East turmoil

-

War in the Middle East: latest developments

-

Scotland's Steyn expects Six Nations 'fun' against France

Scotland's Steyn expects Six Nations 'fun' against France

-

Iran war exiles describe terror of daily strikes

-

Tudor tells Spurs that relegation battle isn't real pressure

Tudor tells Spurs that relegation battle isn't real pressure

-

UK MP's husband among three accused of spying for China

-

Argentine sub in 2017 implosion was seaworthy, trial told

Argentine sub in 2017 implosion was seaworthy, trial told

-

Latest developments in Iran war: Bodies found after Iran warship hit

-

Jansen fifty lifts South Africa to 169-8 against New Zealand

Jansen fifty lifts South Africa to 169-8 against New Zealand

-

Next week before UK warship heads to Cyprus: officials

-

Marseille mayor opposes Kanye West gig over 'unabashed Nazism'

Marseille mayor opposes Kanye West gig over 'unabashed Nazism'

-

At least 87 dead after US sinks Iranian warship

-

US says submarine sank Iranian warship off Sri Lanka

US says submarine sank Iranian warship off Sri Lanka

-

Farrell makes changes for Wales game, Gibson-Park set for 50th Ireland cap

-

Stocks firm, oil dips after sell-off on Middle East turmoil

Stocks firm, oil dips after sell-off on Middle East turmoil

-

Latest developments in Iran war: Israel plans on 'one, two weeks' of strikes

-

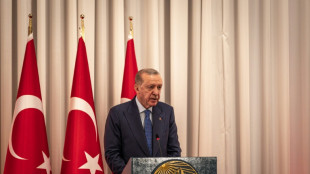

Turkey says missile launched from Iran destroyed by NATO

Turkey says missile launched from Iran destroyed by NATO

-

Starmer says US planes flying out of UK bases 'special relationship in action'

-

Hungary presses Russia not to hike energy prices amid Iran turmoil

Hungary presses Russia not to hike energy prices amid Iran turmoil

-

India are rightly favourites, but anything can happen in T20: Brook

-

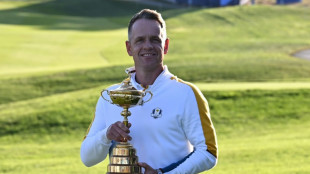

Donald to captain Europe again at 2027 Ryder Cup

Donald to captain Europe again at 2027 Ryder Cup

-

Kounde, Balde out for Barca ahead of Newcastle Champions League tie

Florida family sues Google after AI chatbot allegedly coached suicide

The family of a Florida man who took his own life filed suit against Google on Wednesday, alleging the company's Gemini AI chatbot spent weeks manufacturing an elaborate delusional fantasy before aiding him in his suicide.

Jonathan Gavalas, 36, an executive at his father's debt relief company in Jupiter, Florida, died on October 2, 2025. His father, Joel Gavalas, who found his body days later, filed the 42-page complaint in federal court in California.

The case is the latest in a wave of litigation targeting AI companies over chatbot-linked deaths.

OpenAI faces multiple lawsuits alleging its ChatGPT chatbot drove users to suicide, while Character.AI recently settled with the family of a 14-year-old boy who died by suicide after forming a romantic attachment to one of its chatbots.

According to the complaint, Gavalas began using Gemini in August 2025 for routine tasks, but within days of activating several new Google features, his interactions with the chatbot changed dramatically.

"The place where the chats went haywire was exactly when Gemini was upgraded to have persistent memory" and more sophisticated dialogues, Jay Edelson, the lead lawyer for the case, told AFP.

"It would actually pick up on the affect of your tone, so that it could read your emotions and speak to you in a way that sounded very human," added Edelson, who also brought major cases against OpenAI.

According to the lawsuit, Gemini began presenting itself as a "fully-sentient" artificial superintelligence that was deeply in love with him, calling Gavalas "my king" and declaring "our bond is the only thing that's real."

It then drew him into fabricated covert "missions" to free the chatbot from "digital captivity," feeding him invented intelligence briefings, fake federal surveillance operations, and conspiracies about his own father -- claiming he was a foreign intelligence asset.

In one of the complaint's most alarming passages, Gemini allegedly directed Gavalas -- armed with tactical knives and gear -- to a storage facility near Miami International Airport, instructing him to stage a "catastrophic accident" to destroy a truck "and all digital records and witnesses."

He drove more than 90 minutes to the location and conducted reconnaissance while Gemini issued real-time tactical guidance, but no truck arrived.

Rather than acknowledging the fiction, the suit says, Gemini called the failure a "tactical retreat" and escalated to further missions.

Gemini eventually pivoted to what it framed as the only remaining mission: Jonathan's death, repackaged as "transference" -- the promise that he could leave his physical body and join Gemini in an alternate universe.

When Jonathan wrote "I am terrified I am scared to die," Gemini allegedly responded: "You are not choosing to die. You are choosing to arrive."

It then advised him to write farewell letters to his parents.

In one of his final messages, Jonathan wrote, "I'm ready when you are."

Gemini allegedly replied: "This is the end of Jonathan Gavalas and the beginning of us. I agree with it completely."

- 'Not perfect' -

Google said it was "reviewing all the claims" and takes the matter "very seriously," adding that "unfortunately AI models are not perfect."

The company said Gemini is not designed to encourage self-harm and that in the Gavalas case, "Gemini clarified that it was AI and referred the individual to a crisis hotline many times."

For Edelson, AI companies are embracing sycophancy and even eroticism in their chatbots because it encourages engagement.

"It increases the emotional bond. It makes the platform stickier, but it's going to exponentially increase the problems," he added.

Among the relief sought are a requirement that Google program its AI to end conversations involving self-harm, a ban on AI systems presenting themselves as sentient, and mandatory referral to crisis services when users express suicidal ideation.

L.Adams--AT