-

Slot admits Liverpool in 'survival mode' in PSG defeat

Slot admits Liverpool in 'survival mode' in PSG defeat

-

Trump makes up with Sahel juntas, with eye on US interests

-

Tiger Woods drug records to be subpoenaed by prosecutors

Tiger Woods drug records to be subpoenaed by prosecutors

-

England's Rai wins Par-3 Contest to risk Masters curse

-

Brazil's Chief Raoni backs Lula in elections

Brazil's Chief Raoni backs Lula in elections

-

Trump to discuss leaving NATO in meeting with Rutte

-

Atletico punish 10-man Barcelona, take control of Champions League tie

Atletico punish 10-man Barcelona, take control of Champions League tie

-

Dominant PSG leave Liverpool right up against it in Champions League tie

-

Meta releases first new AI model since shaking up team

Meta releases first new AI model since shaking up team

-

Tehran residents relieved but divided by Trump truce

-

Vance says up to Iran if it wants truce to 'fall apart' over Lebanon

Vance says up to Iran if it wants truce to 'fall apart' over Lebanon

-

US, Iran truce hangs in balance as war flares in Lebanon

-

Scale of killing in Lebanon 'horrific': UN rights chief

Scale of killing in Lebanon 'horrific': UN rights chief

-

'Ketamine Queen' jailed for 15 years over Matthew Perry drugs

-

Betis earn draw in Europa League quarter-final at Braga

Betis earn draw in Europa League quarter-final at Braga

-

Buttler hits form with IPL fifty as Gujarat win last-ball thriller

-

'Total victory' or TACO? Trump faces questions on Iran deal

'Total victory' or TACO? Trump faces questions on Iran deal

-

Medvedev thrashed at Monte Carlo as Zverev battles through

-

Trump to discuss leaving NATO in meeting with Rutte: White House

Trump to discuss leaving NATO in meeting with Rutte: White House

-

Five US multiple major champions seek first Masters win

-

Howell got McIlroy ball as kid and now joins him at Masters

Howell got McIlroy ball as kid and now joins him at Masters

-

Turkey puts 11 on trial for LGBT 'obscenity'

-

Augusta boss eyes tradition and innovation balance at Masters

Augusta boss eyes tradition and innovation balance at Masters

-

In Trump war on Iran, tactical wins and long-term damage to US

-

Argentine MPs to debate watered-down glaciers protection

Argentine MPs to debate watered-down glaciers protection

-

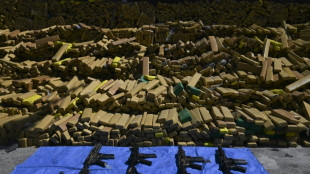

Brazilian police dog sniffs out 48 tons of marijuana in record bust

-

Leicester close to third tier after points deduction appeal dismissed

Leicester close to third tier after points deduction appeal dismissed

-

In the heart of Beirut, buildings in flames and charred cars

-

Dilemma over crossings as fate of Hormuz ships remains uncertain

Dilemma over crossings as fate of Hormuz ships remains uncertain

-

Laurance 'becomes someone else' to nab Tour of the Basque Country stage win

-

Mediators to 'fragile' US-Iran truce urge restraint as violations reported

Mediators to 'fragile' US-Iran truce urge restraint as violations reported

-

Laurance pips Arrieta to Tour of the Basque Country third stage win

-

US, Iran ceasefire sees Israel's war goals left hanging

US, Iran ceasefire sees Israel's war goals left hanging

-

'Unfinished business': Opponents anxious, bitter after Iran ceasefire

-

Dutch minister says not planning to bar Kanye West

Dutch minister says not planning to bar Kanye West

-

France unveils rearmament boost to face Russia threat

-

Suspect remains silent in Swiss bar fire probe

Suspect remains silent in Swiss bar fire probe

-

Italy great Parisse appointed Azzurri forwards coach

-

Iran truce spurs hopes for world economy, but recovery will be rocky

Iran truce spurs hopes for world economy, but recovery will be rocky

-

BAFTA racial slur was breach of BBC editorial standards: internal probe

-

Red or black: Thai men tempt fate at military draft draw

Red or black: Thai men tempt fate at military draft draw

-

CAF president visits Dakar following AFCON trophy reversal

-

Medvedev thrashed 6-0, 6-0 by Berrettini in Monte Carlo

Medvedev thrashed 6-0, 6-0 by Berrettini in Monte Carlo

-

Australia's O'Callaghan sets sights on Titmus's 200m freestyle world record

-

Oil prices plunge, stocks surge on US-Iran ceasefire

Oil prices plunge, stocks surge on US-Iran ceasefire

-

Researchers unmask trade in nude images on Telegram

-

Warner aware of 'seriousness' of drink-driving charges: Cricket NSW

Warner aware of 'seriousness' of drink-driving charges: Cricket NSW

-

Indian hit movie 'Dhurandhar' breaks Bollywood records

-

Australia PM welcomes Iran ceasefire, says Trump threats not 'appropriate'

Australia PM welcomes Iran ceasefire, says Trump threats not 'appropriate'

-

Nigeria sweats in heatwave as Iran war drives up costs to stay cool

Is AI's meteoric rise beginning to slow?

A quietly growing belief in Silicon Valley could have immense implications: the breakthroughs from large AI models (the ones expected to bring human-level artificial intelligence in the near future) may be slowing down.

Since the frenzied launch of ChatGPT two years ago, AI believers have maintained that improvements in generative AI would accelerate exponentially as tech giants kept adding fuel to the fire in the form of data for training and computing muscle.

The reasoning was that delivering on the technology's promise was simply a matter of resources: pour in enough computing power and data, and artificial general intelligence (AGI) would emerge, capable of matching or exceeding human-level performance.

Progress was advancing at such a rapid pace that leading industry figures, including Elon Musk, called for a moratorium on AI research.

Yet the major tech companies, including Musk's own, pressed forward, spending tens of billions of dollars to avoid falling behind.

OpenAI, ChatGPT's Microsoft-backed creator, recently raised $6.6 billion to fund further advances.

xAI, Musk's AI company, is in the process of raising $6 billion, according to CNBC, to buy 100,000 Nvidia chips, the cutting-edge electronic components that power the big models.

However, there appear to be problems on the road to AGI.

Industry insiders are beginning to acknowledge that large language models (LLMs) aren't scaling endlessly higher at breakneck speed when pumped with more power and data.

Despite the massive investments, performance improvements are showing signs of plateauing.

"Sky-high valuations of companies like OpenAI and Microsoft are largely based on the notion that LLMs will, with continued scaling, become artificial general intelligence," said AI expert and frequent critic Gary Marcus. "As I have always warned, that's just a fantasy."

- 'No wall' -

One fundamental challenge is the finite amount of language-based data available for AI training.

According to Scott Stevenson, CEO of AI legal tasks firm Spellbook, who works with OpenAI and other providers, relying on language data alone for scaling is destined to hit a wall.

"Some of the labs out there were way too focused on just feeding in more language, thinking it's just going to keep getting smarter," Stevenson explained.

Sasha Luccioni, researcher and AI lead at startup Hugging Face, argues a stall in progress was predictable given companies' focus on size rather than purpose in model development.

"The pursuit of AGI has always been unrealistic, and the 'bigger is better' approach to AI was bound to hit a limit eventually -- and I think this is what we're seeing here," she told AFP.

The AI industry contests these interpretations, maintaining that progress toward human-level AI is unpredictable.

"There is no wall," OpenAI CEO Sam Altman posted Thursday on X, without elaboration.

Anthropic's CEO Dario Amodei, whose company develops the Claude chatbot in partnership with Amazon, remains bullish: "If you just eyeball the rate at which these capabilities are increasing, it does make you think that we'll get there by 2026 or 2027."

- Time to think -

Nevertheless, OpenAI has delayed the release of the awaited successor to GPT-4, the model that powers ChatGPT, because its increase in capability is below expectations, according to sources quoted by The Information.

Now, the company is focusing on using its existing capabilities more efficiently.

This shift in strategy is reflected in its recent o1 model, designed to provide more accurate answers through improved reasoning rather than increased training data.

Stevenson said an OpenAI shift to teaching its model to "spend more time thinking rather than responding" has led to "radical improvements".

He likened the advent of AI to the discovery of fire. Rather than tossing on more fuel in the form of data and computing power, it is time to harness the breakthrough for specific tasks.

Stanford University professor Walter De Brouwer likens advanced LLMs to students transitioning from high school to university: "The AI baby was a chatbot which did a lot of improv" and was prone to mistakes, he noted.

"The homo sapiens approach of thinking before leaping is coming," he added.

D.Lopez--AT